April 11, 2022

Posted by Jeremy Hess

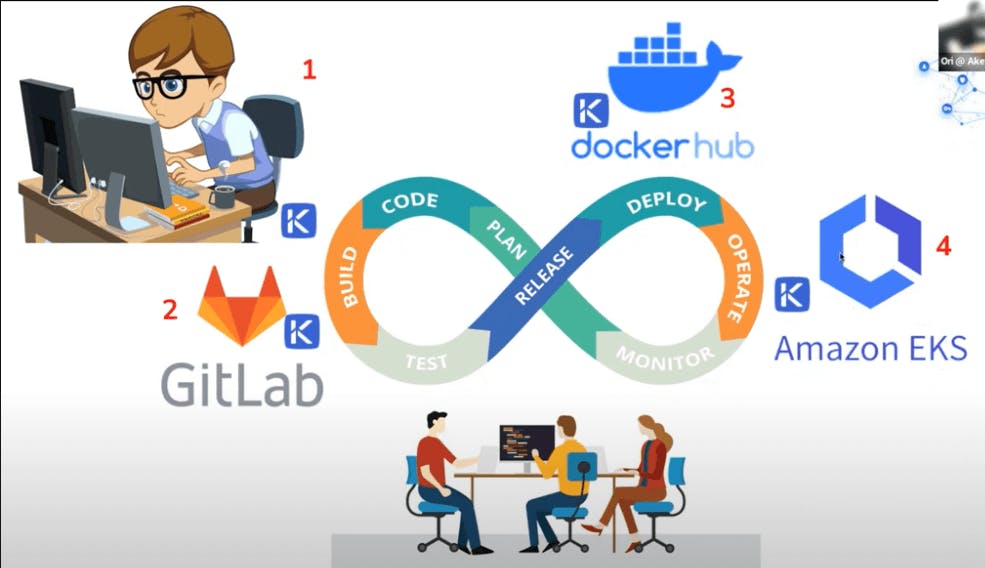

In this demo, VP of R&D Ori Mankali walks through a simple application built with GitLab: the pipeline builds a Docker image, pushes it to Docker Hub, and that image is then pulled to run the application on Amazon EKS.

The demo shows the initial state of the application and then pushes changes that trigger a new workflow to update the application, all using just-in-time access with different types of secrets, from SSH certificates to JWT tokens and more.

Ultimately, we learn how the Akeyless Vaultless® Platform enables you to ensure no static passwords are used when building and maintaining your DevOps pipeline.

Watch the video below

TRANSCRIPT

Hello everyone, my name is Ori, I’m the VP of R&D at Akeyless. Today, I’m going to talk about how to run your production environment without any standing privileges. So in today’s agenda, we’re going to introduce you to some of the things that we’re doing at Akeyless, and talk about recent trends and the motivation for this topic.

We’ll look at how the evolution of modern computing has made existing security problems much more severe in different environments. And maybe a little bit about how a secrets management platform like Akeyless can be a good solution for that, in combination with the ability to generate just-in-time credentials or just-in-time access to certain production environments, which eliminates the need to use static secrets or static credentials.

In the last couple of years, we’ve seen a clear trend, mostly from the digital transformation that led so many organizations to move their workloads to the cloud: the use of cloud-native technologies like containers and orchestration, the auto-scaling or elasticity of the cloud, and different DevOps toolchains. All of that has resulted in a lot of ephemeral applications running in different environments.

The main challenge is that applications require a certain set of credentials to do whatever they were designed to do. One example that I have in mind is a back-end application that needs to communicate with a database. In order to authenticate to the database, you need credentials, and those are typically stored in a static fashion, meaning that they are stored in a persistent configuration, or even in the workspace, inside the code. This means that those credentials are standing, so a potential hacker could just grab them and use them anywhere they want, without anyone knowing or noticing.

This could later be used for what is described as lateral movement: gathering initial credentials, then accessing the database to get more information, and from there moving to other hosts or other devices, and so on and so forth.

The SDLC, the software development lifecycle, has become much more advanced in recent years. First of all, CI/CD pipelines are today the de facto standard. You have lots of Git repos, most of them cloud services, which means that your code is not residing on your own host in your own on-prem data center. And you have lots of orchestration that also requires access to configuration files, the actual code execution, and so on.

This, again, opens a lot of potential attack vectors for different hackers. In all of those environments, starting from the container registry, to GitHub or GitLab or whatever Git repo you have, to different scanning or security tools, to CI/CD platforms, to deployments and so on, all of them require some kind of credentials, which end up sprawled across the entire production environment.

So what’s the main problem that we’re talking about? First of all, there are too many types of secrets, and many of them, to make life easier, belong to privileged users, meaning that they have permissive access to certain systems. They’re also not often rotated or modified, which means that they can last for weeks, months, and maybe even years without being changed.

And there is no good auditing around them, so you don’t actually know who uses a certain credential to access a specific system. They could easily leak to different users, or maybe malicious users, and from there, the way to a security problem is very short.

The reason that secrets management platforms were developed is mostly to protect sensitive information at the steady state, like static secrets, in a way that makes accessing them much harder. For one, any type of access requires the user to be authenticated and authorized to access the secret. Secondly, you have a good way of auditing and tracking the activity, like which user or which application was the one trying to get access to the credential.

Thirdly, at the steady state, at rest, the credential will be encrypted, and it will only be decrypted by the application that requires it. This means that there is a very short period of time, in memory, where the credentials are decrypted and used for whatever they need to be used for.

So, what we’re talking about, the ultimate solution, is to use a different kind of credentials. We call them just-in-time access or just-in-time credentials. In some terminology, they’re referred to as dynamic secrets.

The concept behind it is that you don’t have any kind of username or password that is steady or static in that sense. Anytime an application or a user needs to access certain systems, they authenticate to the secrets management platform, which, in turn, generates dedicated credentials for this specific session. Those credentials are ephemeral, in the sense that they have a TTL, or time to live, so they expire after a predefined amount of time and cannot be used at all after that. This means that at the steady state, if nobody’s running or executing some kind of operation, there will be no credentials on the target systems, right?
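To make the TTL idea concrete, here is a minimal sketch in Python of a credential that is generated per session and stops being valid after a fixed lifetime. It is not how Akeyless implements dynamic secrets; the names `EphemeralCredential` and `issue_credential`, and the `tmp-` username format, are hypothetical and chosen only for illustration.

```python
import secrets
import time
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class EphemeralCredential:
    """A per-session credential that is only valid until `expires_at`."""
    username: str
    password: str
    expires_at: float  # seconds since the epoch

    def is_valid(self, now: Optional[float] = None) -> bool:
        # The credential is usable strictly before its expiry time.
        current = time.time() if now is None else now
        return current < self.expires_at


def issue_credential(session_id: str, ttl_seconds: int,
                     now: Optional[float] = None) -> EphemeralCredential:
    """Generate a fresh, random credential for one session (hypothetical helper)."""
    issued_at = time.time() if now is None else now
    return EphemeralCredential(
        username=f"tmp-{session_id}-{secrets.token_hex(4)}",
        password=secrets.token_urlsafe(24),
        expires_at=issued_at + ttl_seconds,
    )
```

In a real platform the target system would also remove the temporary user when the TTL passes; the sketch only models the validity window on the client side.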

So, let’s switch to a quick demo. What I have here is basically a demonstration of the SDLC, right? So, this is a very famous diagram that shows that you start with code, and then you’re building your application, you’re testing it, releasing, deploying, and so on. This is an infinite loop of development.

So, in our case, we’re going to see an application called ‘sample’. This is a very small application, just for demonstration, written in Python. Then we’re going to use one of the Git repository and CI/CD platforms, called GitLab. This is just one of the systems that are familiar today. Later on, we’re going to make the CI/CD pipeline build a Docker image and deploy it to the Docker Hub registry. We’re going to use it in order to pull the application, which is actually running on Amazon EKS, right? This is serving a web application, which we can test and show.

All of those components will be secured in different manners by the Akeyless platform. So, the coding environment is actually running on a remote host, and we connect to it using short-lived SSH certificates. In GitLab, essentially, we’re adding dynamic credentials or just-in-time credentials to access different platforms like Docker Hub and Amazon EKS and so on, right? Same thing in every place.

So, in Docker Hub, we will generate a temporary short-lived personal access token, in order to build and upload the image. The image obviously is a private image. With Amazon EKS, we would use dynamic credentials to generate an access token to the Kubernetes cluster. So, in order to authenticate to the EKS cluster, we’ll use short-lived tokens.

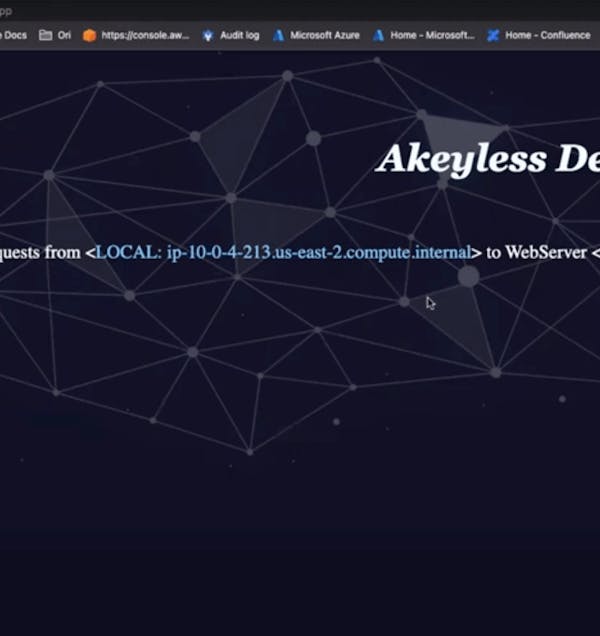

So, let’s switch to the demo. So, I’ll start with the code environment, maybe I’ll start with the application first of all. So, this is like a demo application that you can see here, it doesn’t do much, right? We just see the logo.

We see some requests that are coming to the application, the timestamp when each one happened, and also the IP address. Because we’re using a load balancer in our case, we basically see the IP address of the load balancer itself. It’s meaningless in terms of the application, but just to show you what we’re seeing here. We also have this friend over here, who was supposed to play the guitar, but right now, he’s not moving much, right?

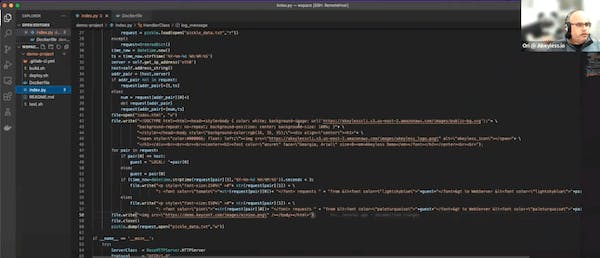

So, this is the sample code that I’m using. Essentially, it’s writing an HTML file. So, what I’m going to do here is modify this one. I’m just going to do some random code change, so I’m going to change the color of the Akeyless demo logo, and I’m going to modify that, kind of to imitate that this was a bug fix, right? So, I’m saving the file, and I’m going to commit the changes to my source repo, which, as I mentioned, is in GitLab, right? I’m going to push the changes to the repo.

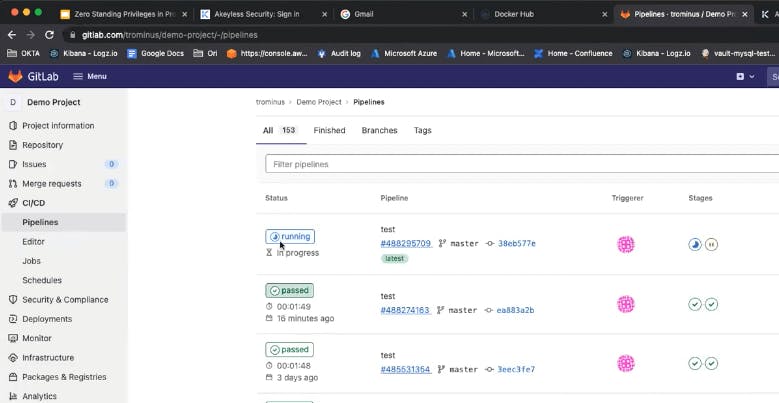

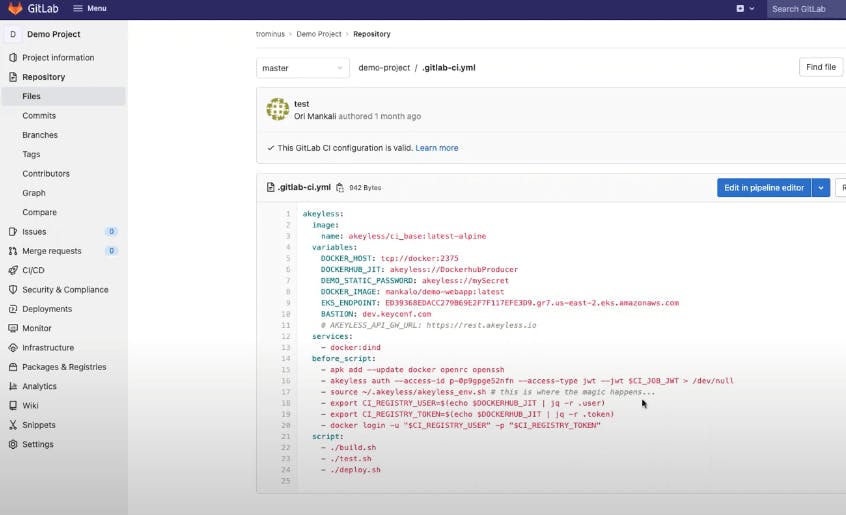

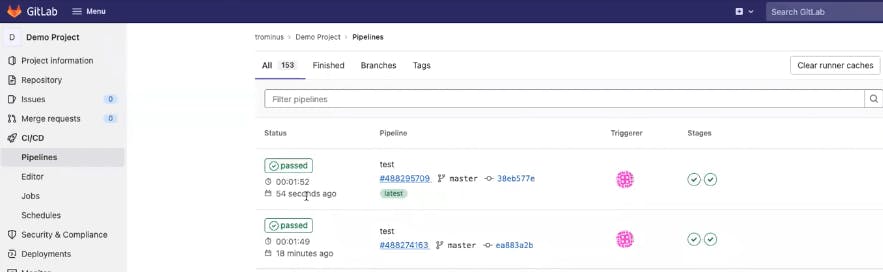

Whenever I do that, I can see that a new pipeline job is triggered automatically, right? If I look at that, we will see that right now the code will be pulled, and then there will be a certain set of actions. So, I’m going to look very quickly at the repo, to show you what the pipeline is composed of.

Essentially, it’s using a base image that we provide, though it can be any image of your choice, and then there are certain annotations, as you can see, inside the pipeline. For example, there is this annotation, which is an environment variable that currently holds this value: the “Akeyless colon slash” prefix marks it as a reference to a secret in Akeyless. There are other variables, like the Docker image name, the EKS endpoint, and other attributes, right? As you can see, there are no secrets in the code, none in the configuration files, and none even stored on GitLab itself, okay.
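As an illustration of the reference idea, the sketch below resolves pipeline variables whose value is a pointer to a secret rather than the secret itself. The exact spelling of the prefix (`akeyless:/` here), the function name `resolve_variables`, and the `fetch_secret` callback are all assumptions made for this example; the real pipeline integration may work differently.

```python
from typing import Callable, Dict, Mapping

# Assumed spelling of the reference prefix heard in the demo ("Akeyless colon slash").
SECRET_PREFIX = "akeyless:/"


def resolve_variables(variables: Mapping[str, str],
                      fetch_secret: Callable[[str], str]) -> Dict[str, str]:
    """Replace secret references with values fetched at run time.

    `fetch_secret` is a hypothetical callback that takes a secret path and
    returns its value; plain variables are passed through unchanged.
    """
    resolved: Dict[str, str] = {}
    for name, value in variables.items():
        if value.startswith(SECRET_PREFIX):
            # "akeyless:/demo/token" -> secret path "/demo/token"
            path = "/" + value[len(SECRET_PREFIX):]
            resolved[name] = fetch_secret(path)
        else:
            resolved[name] = value
    return resolved
```

The point of the design is that the repository and the CI configuration only ever hold the path; the value exists only inside the running job.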

So, the authentication process is using the JWT that is received from the GitLab infrastructure. This process is essentially validating that this job is authorized to access those secrets. If so, it will use the secrets, the dynamic secrets, to perform whatever actions are required, like Docker login, and then building, and then testing and deploying, right?
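The authorization step can be pictured as comparing claims from the job’s JWT against values the secrets platform expects. The sketch below assumes the token’s signature has already been verified elsewhere and only shows the claim check; `job_is_authorized` and `bound_claims` are hypothetical names, and the claims used in the usage note (`project_path`, `ref`) are examples of what a GitLab job token carries.

```python
import time
from typing import Any, Mapping, Optional


def job_is_authorized(claims: Mapping[str, Any],
                      bound_claims: Mapping[str, Any],
                      now: Optional[float] = None) -> bool:
    """Check already-verified JWT claims against the expected values.

    Assumes signature verification happened before this call.
    """
    current = time.time() if now is None else now
    # Reject tokens that are expired or carry no expiry at all.
    if claims.get("exp", 0) <= current:
        return False
    # Every bound claim must be present in the token with the expected value.
    return all(claims.get(key) == expected
               for key, expected in bound_claims.items())
```

For example, binding access to `{"project_path": "demo/sample", "ref": "main"}` would let only jobs from that project and branch request the dynamic secrets.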

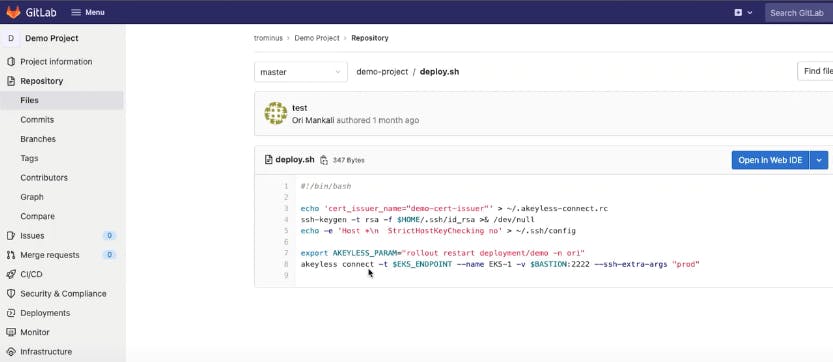

So, if I go back to the code, I can see what the build process looks like. It’s just simply ‘docker build’ and ‘docker push’, and then running the application and deploying it, right? So, it’s very simple to do that. This is how we do the deployment: it’s essentially using Akeyless to connect to the EKS cluster and perform a rollout restart for the deployment.
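The sequence described here (build, push, rollout restart) can be sketched as a list of commands. The image name, deployment name, and namespace below are placeholders, not the values used in the demo, and the helper functions are hypothetical; the sketch only builds the argument lists and leaves execution to the caller.

```python
from typing import List


def build_command(image: str, context_dir: str = ".") -> List[str]:
    # Build the image from the given context directory and tag it.
    return ["docker", "build", "-t", image, context_dir]


def push_command(image: str) -> List[str]:
    # Push the tagged image to the registry it is named for.
    return ["docker", "push", image]


def rollout_restart_command(deployment: str, namespace: str = "default") -> List[str]:
    # Restart the deployment so its pods pull the newly pushed image.
    return ["kubectl", "rollout", "restart", f"deployment/{deployment}",
            "--namespace", namespace]


def pipeline_commands(image: str, deployment: str,
                      namespace: str = "default") -> List[List[str]]:
    """Return the build, push, and deploy commands in execution order."""
    return [
        build_command(image),
        push_command(image),
        rollout_restart_command(deployment, namespace),
    ]
```

Each list could be passed to `subprocess.run(cmd, check=True)` in a job that has already logged in to the registry and the cluster with its short-lived credentials.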

All of that is done using just-in-time credentials. You don’t see any sensitive information in the code, the configuration files, or anywhere else.

Now, if I look at Docker Hub, when I refresh the page, what I expect to see here is a temporary access token that was generated, like in this case, right? This was generated by the CI/CD platform, right? Just a few seconds ago, right? Then, when the job is completed, this personal access token will be deleted.
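The create-then-delete lifecycle of that token maps naturally onto a context manager. This is a minimal sketch, assuming hypothetical `create` and `revoke` callbacks that stand in for whatever actually issues and removes the token; it does not call any real Docker Hub or Akeyless API.

```python
from contextlib import contextmanager
from typing import Callable, Iterator


@contextmanager
def temporary_token(create: Callable[[], str],
                    revoke: Callable[[str], None]) -> Iterator[str]:
    """Yield a freshly created token and always revoke it afterwards.

    `create` and `revoke` are hypothetical callbacks supplied by the caller.
    """
    token = create()
    try:
        yield token
    finally:
        # Runs whether the job succeeded or raised, so no token is left behind.
        revoke(token)
```

Putting the revocation in `finally` means a failed build does not leave a usable token on the registry.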

Let’s look at the job and see what its status is. We see that it passed like 54 seconds ago, and if I go to the application and refresh the page, now I can see the new color of the logo, and this guy over here started playing, right?

If I go to the account, to the Akeyless account, to check the audit logs, I’m expecting to see all the activities that I had. So, I’m going to filter by that. I’m going to see that there was an authentication, and a get dynamic secret operation for the EKS cluster, and there is another get dynamic secret operation. Let me filter by action; it will be easier to show that.

So, we see that there was an authentication from GitLab to get a dynamic secret for the Docker Hub producer, and then there was an access to the EKS cluster by your Kubernetes cluster, and all the activities are documented and logged here, right? Those logs, by the way, can be forwarded to any existing log or SIEM system of your choice.
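Filtering an audit trail by action, as done in the console here, amounts to a simple selection over log records. The record fields (`action`, `actor`, `target`) and the action names in the usage note are hypothetical; they are not the actual Akeyless log schema.

```python
from typing import Dict, Iterable, List, Optional


def filter_events(events: Iterable[Dict[str, str]],
                  action: str,
                  actor: Optional[str] = None) -> List[Dict[str, str]]:
    """Return events with the given action, optionally narrowed to one actor."""
    return [
        event for event in events
        if event.get("action") == action
        and (actor is None or event.get("actor") == actor)
    ]
```

For instance, `filter_events(log, "get-dynamic-secret", actor="gitlab")` would keep only the secret requests made by the pipeline, assuming the log used those labels.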

That’s about it. So essentially, I’m running a full SDLC cycle without any static or standing credentials in my code, configuration, or any environment that I have. Thank you very much for your time, and I’m looking forward to the next session.